Investigating AI videos disguised as real events

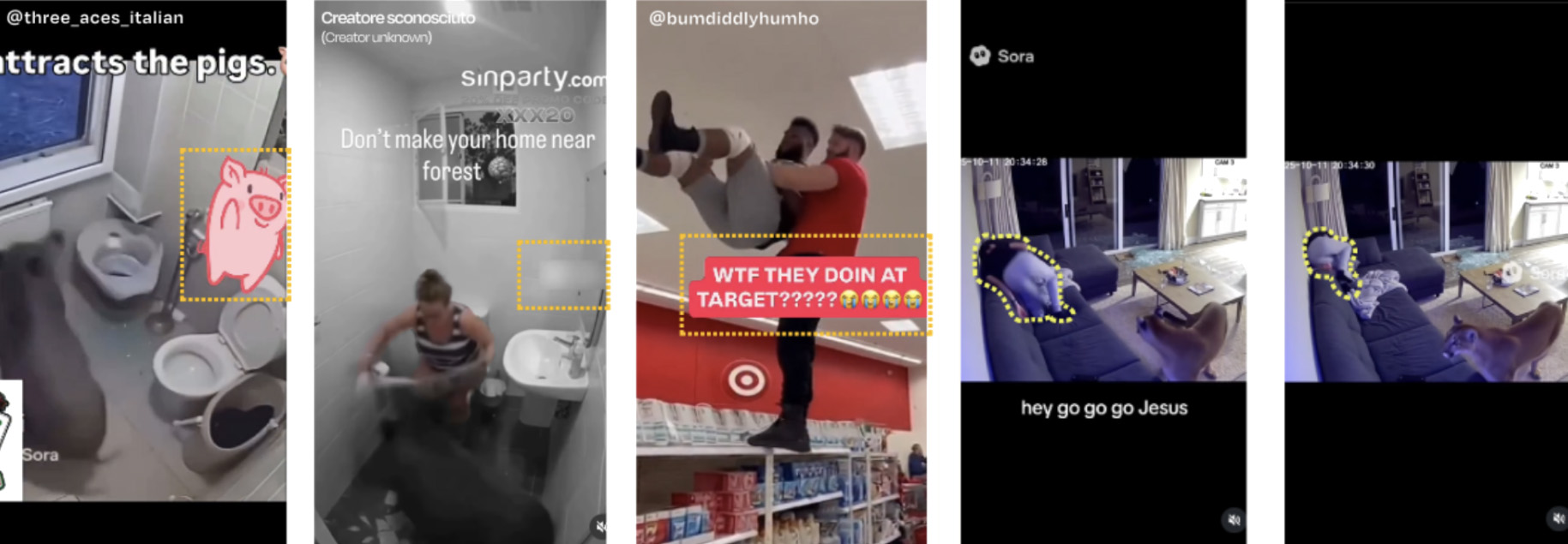

Reality (Un)Rendered is a triptych of posters that highlights visual and contextual clues for identifying the use of artificial intelligence in seemingly realistic Instagram Reels. The posters are accompanied by a tablet on a table that plays a looped selection of the videos to provide viewing context. The project is based on a dataset of 34 Instagram videos collected through keyword searches such as “AI”, “FYP” and “Sora”. Comments referring to AI use or perceived realism were extracted and categorized into eight AI indicators. The indicators, each illustrated by the frames that best represent it, are ordered from most evident to most subtle, moving from immediate visual cues to signs that require critical reasoning. The artifact aims to help audiences recognize AI-generated footage even when it looks highly realistic.

Artifact design

The exhibition artifact was designed through a combination of data transformation and visual structuring, with the goal of making the patterns behind AI realism legible to a general audience. The initial dataset included 34 Instagram videos, collected manually through keyword searches such as “AI”, “Sora”, and “FYP”, together with their comment sections. Of these, 10 showed a “Sora” watermark, 11 were explicitly labeled as AI-generated, and 13 carried no indication of AI use. More than 300 comments discussing authenticity, realism, or suspected AI use were extracted from these comment sections and analyzed qualitatively.

Rather than using automated text analysis, comments were manually grouped into eight recurring AI indicators:

- Creator disclosure: cases where creators signal AI use in captions or hashtags, or by leaving software watermarks visible.

- Repetitive narrative patterns: AI videos often repeat similar plot structures.

- Implausible camera placement: surveillance-like footage filmed from unlikely angles, or scenes in which the person supposedly filming shows no realistic reaction.

- Unnatural behavior: robotic, illogical, or unusual gestures and actions.

- Visual inconsistency: details that should remain stable change abnormally from frame to frame.

- Rendering artifacts: clipping, abrupt blur effects, or unstable textures.

- Impossible physics: movements and interactions that violate physical laws.

- Implausible scenarios: absurd situations with unrealistic consequences.

Each video was analyzed frame by frame to identify moments that clearly exemplified one or more indicators. Screenshots were captured where inconsistencies, physical impossibilities, or narrative clues were most visible, and were then arranged side by side. Selected frames were annotated with outlines, highlights, and sequences to direct attention to relevant details. In parallel, comments expressing suspicion, confusion, or recognition of AI were paired with frames to show how audiences verbally interpret these cues.

Frame-by-frame analysis

Visually, the artifact is organized as a triptych of posters, each dedicated to a subset of indicators. Indicators are arranged from explicit to subtle: first creator-provided signals (caption, hashtag, watermark), then narrative patterns and camera logic, and finally more abstract clues such as broken physics or implausible scenarios. Within each section, frames are shown in short sequences to emphasize inconsistencies over time rather than as static snapshots. Cropping, opacity adjustments, and highlighting, including orange guide lines, help visitors quickly interpret key details.

Highlight lines guiding visitors’ attention to key details in the screenshots.

A table in the exhibition area introduces the project and provides instructions for analyzing the AI videos. A tablet plays AI-generated clips in a loop, allowing visitors to observe movement before comparing it with the annotated frames on the posters. Nearby instructions guide visitors through a three-step process: watch the looped clips, examine the highlighted indicators, and complete the evaluation booklet. The evaluation asks visitors to identify specific AI cues and visual inconsistencies between frames. This setup encourages active engagement and supports structured practice in distinguishing AI-generated content from real footage.

Exhibition table presenting the project